Drum & Vase

LLM Music Generator

Drum & Vase is an LLM music generator that gives AI a sense of place in its environment. It reflects on how we understand our surroundings through our senses and invites curiosity about what a place "sounds" like. It recreates the idea of environmental ambience and brings LLMs closer to human understanding.

In Drum & Vase, I wanted to extend the senses of Large Language Models so they can understand and generate a sense of place within our environments, composing music that reflects the dynamic character of a space. This opens up opportunities for AI to "read the room" and even develop emotional connections to a location. Now we can begin to ask: What if our environments could respond to us?

How It Works

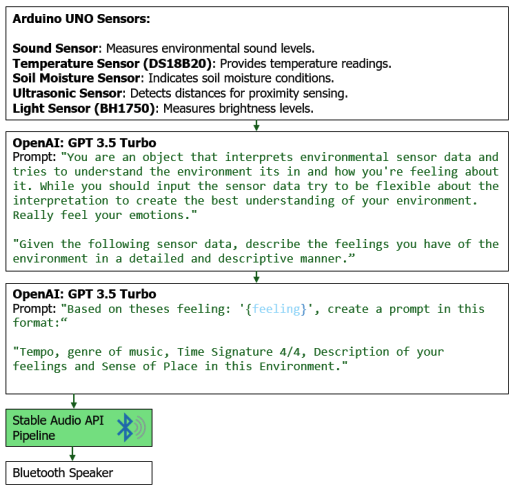

Drum & Vase takes passive inputs from Arduino sensors (Sound, Soil Moisture, Ambient Light, Proximity, and Temperature) and sends the values over serial, where they are used to prompt the OpenAI and AudioCraft APIs.
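To make the sensor-to-prompt step concrete, here is a minimal Python sketch of how one serial line might be turned into a text prompt. It is not the project's actual code: the comma-separated line format, the field order, the `Reading` class, and the prompt wording are all assumptions made for illustration, and the serial port itself is left out so the example runs without hardware.

```python
from dataclasses import dataclass

# Assumed order of values in one serial line; the real Arduino sketch may differ.
FIELDS = ("sound", "soil_moisture", "light", "proximity", "temperature")


@dataclass
class Reading:
    """One snapshot of the five sensors (hypothetical container)."""
    sound: float
    soil_moisture: float
    light: float
    proximity: float
    temperature: float


def parse_reading(line: str) -> Reading:
    """Parse one comma-separated serial line into a Reading."""
    parts = [p.strip() for p in line.strip().split(",")]
    if len(parts) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} values, got {len(parts)}")
    return Reading(*(float(p) for p in parts))


def build_prompt(r: Reading) -> str:
    """Turn a Reading into a text prompt (illustrative wording only)."""
    return (
        "Describe music that matches this room. "
        f"Sound level: {r.sound:g}, soil moisture: {r.soil_moisture:g}, "
        f"ambient light: {r.light:g}, proximity: {r.proximity:g}, "
        f"temperature: {r.temperature:g}."
    )


if __name__ == "__main__":
    # A made-up line standing in for what the Arduino might send.
    sample = "312, 540, 87, 42, 23.5\n"
    print(build_prompt(parse_reading(sample)))
```

In a real setup the sample string would come from the serial port, and the resulting prompt would be passed on to the language model and then to the music model.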

An mp3 file is produced and automatically sent to a Bluetooth speaker within the Large Language Object (LLO).

The constant looping of audio and the dynamics of the environment transform the sound over time, creating a living environmental ambience.
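The looping behaviour can be pictured as a repeated read, generate, play cycle. The sketch below is one hedged way to structure it in Python. The function `run_cycles` and its three callables are hypothetical names: in the installation they would stand for reading a line from the Arduino, calling the OpenAI and AudioCraft steps, and sending audio to the Bluetooth speaker. Here they are injected as plain functions so the loop can run without any hardware or network access.

```python
from typing import Callable, List


def run_cycles(
    read_line: Callable[[], str],
    generate: Callable[[str], bytes],
    play: Callable[[bytes], None],
    cycles: int,
) -> List[str]:
    """Run the read -> generate -> play cycle a fixed number of times.

    Returns the sensor lines that actually produced audio.
    """
    used = []
    for _ in range(cycles):
        line = read_line().strip()
        if not line:
            continue  # skip empty reads, e.g. a serial timeout
        audio = generate(line)  # stand-in for the prompt and music-generation steps
        play(audio)             # stand-in for sending audio to the speaker
        used.append(line)
    return used


if __name__ == "__main__":
    # Made-up sensor lines; the empty string imitates a read with no data.
    fake_lines = iter(["312,540,87,42,23.5", "", "298,538,120,15,23.7"])
    played = []
    run_cycles(
        read_line=lambda: next(fake_lines),
        generate=lambda text: text.encode("utf-8"),
        play=played.append,
        cycles=3,
    )
    print(f"{len(played)} clips played")
```

Because each cycle starts from a fresh reading, any change in the room feeds into the next clip, which is how the ambience can drift over time.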

System diagram — sensor inputs to audio output pipeline

Model Documentation